Data Centers in Space: Promise, Limits, and Reality

As the limits of terrestrial data infrastructure come into sharper focus, space is emerging as a provocative new frontier for computing. From orbital data centers powered by near-continuous solar energy to experimental missions like Starcloud’s latest project, the idea of processing data beyond Earth is moving from speculation toward early validation. This blog explores what space-based data centers are, why they’re being taken seriously, and how close the industry really is to its final frontier.

The concept of extending computing infrastructure beyond Earth has increasingly shifted from science fiction to a topic of early-stage strategic discussion. Prominent technology leaders, including Elon Musk and Jeff Bezos, have argued that in the long term, energy-intensive industries may need to move off-planet to preserve Earth’s limited resources. Bezos has even suggested that gigawatt-scale data centers could begin operating in space within the next 10 to 20 years, citing the advantages of uninterrupted solar energy and the absence of weather disruptions in orbit.

As terrestrial data center expansion faces increasing scrutiny due to energy consumption, land use constraints, and environmental impact, attention has begun shifting toward unconventional long-term alternatives.

One such concept under early exploration is the relocation of some data center operations off-planet, positioning space as a potential future frontier for digital infrastructure.

While still largely theoretical, the idea has gained visibility among technology leaders and AI companies amid broader debates around sustainable computing and infrastructure scalability, and it is increasingly framed as a possible long-term expansion pathway for the global data center market.

The Case for Off-Earth Computing

Interest in space-based data centers is not driven by a single factor, but by a convergence of structural pressures facing terrestrial infrastructure. As AI workloads grow and sustainability constraints tighten, stakeholders are exploring whether relocating selected compute functions beyond Earth could alleviate long-term bottlenecks. The core drivers of interest fall into four broad categories:

Environmental Considerations

Space-based facilities could reduce land use, freshwater consumption, and grid dependency compared to large terrestrial facilities, and could lower lifecycle emissions by relying primarily on direct solar energy.

Energy Availability and Efficiency

Earth-based data centers face growing constraints, including limited grid capacity, scarce land, and local resistance, alongside the high cost of backup power such as the extensive diesel generator fleets needed for AI expansion. These pressures are driving interest in space-based alternatives. Solar arrays in suitable orbits can receive stable, near-continuous power without atmospheric interference, reducing reliance on costly energy storage. Space computing is therefore being explored as a way to decouple future digital growth from terrestrial energy and land limitations.

Operational Advantages

In-orbit data processing reduces the need to downlink large volumes of raw data for satellite-based applications such as Earth observation, navigation, and defense analytics.

Security and Resilience

Orbital facilities are physically insulated from terrestrial risks such as natural disasters, grid failures, local disruption, and geopolitical instability, although they trade these for space-specific hazards such as radiation.
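How continuous orbital solar power really is depends on the orbit chosen. The sketch below estimates the fraction of a circular orbit spent in Earth's shadow, assuming a simple cylindrical shadow model and a spherical Earth. The function name, the 550 km example altitude, and the beta angles are illustrative assumptions, not figures from any specific proposal.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius (assumed spherical)


def eclipse_fraction(altitude_km: float, beta_deg: float = 0.0) -> float:
    """Fraction of a circular orbit spent in Earth's shadow.

    Assumes a cylindrical shadow and ignores the penumbra.
    beta_deg is the angle between the orbital plane and the Sun direction.
    """
    r = EARTH_RADIUS_KM + altitude_km
    beta = math.radians(abs(beta_deg))
    # At or above this critical beta angle the orbit never enters the shadow.
    beta_crit = math.asin(EARTH_RADIUS_KM / r)
    if beta >= beta_crit:
        return 0.0
    # sqrt(r^2 - R^2), written in terms of altitude
    horizon = math.sqrt(altitude_km**2 + 2 * EARTH_RADIUS_KM * altitude_km)
    return math.acos(horizon / (r * math.cos(beta))) / math.pi


if __name__ == "__main__":
    for beta in (0, 30, 60, 80):
        frac = eclipse_fraction(550, beta)
        print(f"beta = {beta:2d} deg: {frac:.1%} of the orbit in eclipse")
```

Under these assumptions, a 550 km orbit with the Sun in the orbital plane spends roughly a third of each revolution in shadow, while a high-beta orbit of the dawn-dusk sun-synchronous kind can avoid eclipse for long stretches. This is why "continuous" solar power is a property of particular orbits rather than of space in general.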

Understanding Data Centers in Space

Data centers in space refer to computational infrastructure designed to store and process data in orbit or on the lunar surface, rather than within terrestrial facilities. Current concepts generally fall into three architectural models.

Low Earth Orbit Data Centers

The first involves orbital edge data centers, a subset of Low Earth Orbit (LEO) compute structures in which satellites process data from their onboard sensors directly in orbit.

Most proposed architectures for space-based data centers focus on Low Earth Orbit (LEO), reflecting a trade-off between accessibility, power availability, and communication latency. LEO deployments benefit from shorter transit times to orbit, lower propulsion requirements, and reduced signal delay, making them suitable for data processing tasks closely linked to satellite constellations.

While several technology firms and space data center startups have explored LEO-based compute concepts through research initiatives and prototype designs, no hyperscaler has publicly committed to a commercial orbital data center constellation as of early 2026.

Nonetheless, LEO remains the most frequently cited orbital regime because it is relatively mature and already familiar from other satellite systems.

Lunar Surface Data Centers

The second model is an alternative and more radical approach: off-Earth cloud data centers placed on the lunar surface, beginning with early-stage demonstrations of off-Earth data storage.

The Moon offers unique advantages, including long-term physical stability, predictable thermal cycles, and geopolitical neutrality. Proposals to locate hardware within lunar lava tubes aim to mitigate radiation exposure, micrometeorite risk, and extreme temperature swings, concerns that parallel the thermal and physical resilience challenges of terrestrial facilities.

This approach faces substantial technical hurdles, including supplying power through the roughly two-week lunar night and the absence of maintenance infrastructure, positioning it firmly as a long-horizon concept rather than a near-term deployment model.

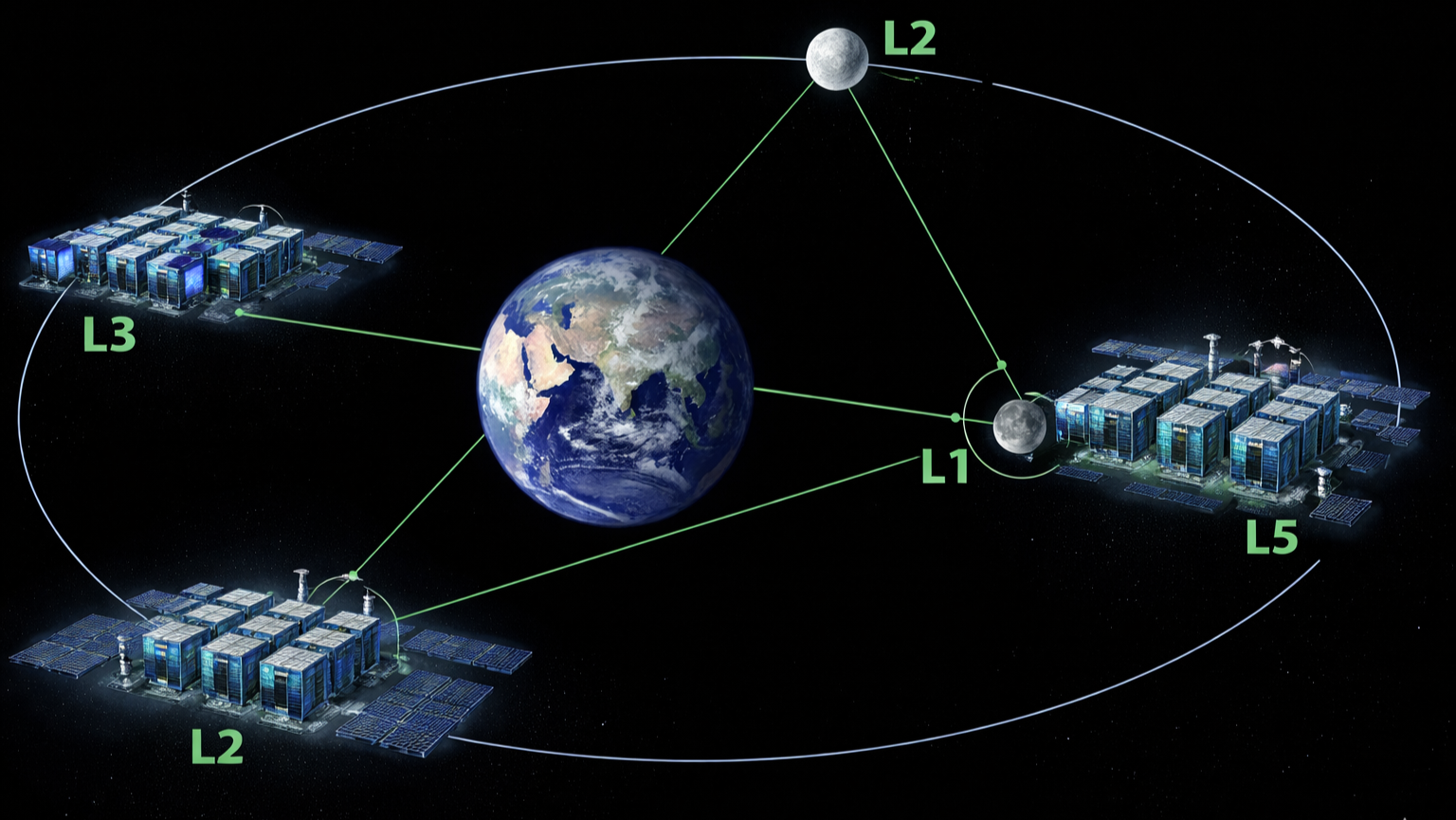

Earth–Moon Lagrange Point

A third category of proposals considers placing computational infrastructure near the Earth–Moon Lagrange Point L1 (EML1), where gravitational forces between the Earth and Moon partially balance.

This region offers continuous line-of-sight communication with Earth and relative orbital predictability, which could support persistent data relay and processing functions.

While EML1 does not provide a perfectly stable orbit and still requires active station-keeping, it is often viewed as a strategic midpoint between Earth-centric and lunar-centric architectures. However, no operational data infrastructure has been deployed at EML1 to date.
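The three architectural models differ sharply in signal delay. The sketch below compares the one-way light-time for each, assuming straight-line propagation at the speed of light with no processing, routing, or ground-network delay. The distances are approximate, illustrative values (a 550 km LEO altitude with the satellite directly overhead, about 326,000 km to EML1, and the mean Earth-Moon distance), not parameters of any particular proposal.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458

# Approximate distances from Earth; illustrative assumptions only.
DISTANCES_KM = {
    "LEO (550 km, satellite overhead)": 550.0,
    "EML1 (approx. 326,000 km)": 326_000.0,
    "Lunar surface (mean Earth-Moon distance)": 384_400.0,
}


def one_way_delay_ms(distance_km: float) -> float:
    """Minimum one-way signal delay in milliseconds over a straight-line path."""
    return distance_km / SPEED_OF_LIGHT_KM_S * 1000.0


if __name__ == "__main__":
    for name, distance in DISTANCES_KM.items():
        delay = one_way_delay_ms(distance)
        print(f"{name}: {delay:,.1f} ms one way, {2 * delay:,.1f} ms round trip")
```

Even this lower bound shows why LEO suits latency-sensitive processing: its delay is a few milliseconds, while EML1 and the lunar surface sit above one second each way, which favors storage, relay, and batch workloads.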

Engineering Foundations of Space-Based Data Centers

From an engineering perspective, these systems are typically proposed to operate using high-intensity solar power, taking advantage of the near-continuous solar exposure available in some orbits. Thermal management relies on radiative cooling, where waste heat is dissipated through large radiator surfaces into space.

Because the vacuum environment eliminates convective heat transfer, operating in space does not make cooling easier. Thermal control is one of the most significant technical challenges, arguably more complex than the liquid cooling problems terrestrial data centers face today.

Despite their futuristic appeal, data centers in space face substantial economic, operational, and regulatory barriers. Launch costs, radiation exposure, hardware servicing limitations, latency considerations, and unresolved governance frameworks all constrain near-term feasibility.

As of early 2026, space-based data centers remain experimental and exploratory in nature. They are best understood as a long-term research direction rather than a deployable solution to current Earth-based data center constraints.

Key Projects and Early Experiments

Project Suncatcher

Project Suncatcher is a conceptual space-based computing initiative associated with research and patent activity from Google’s advanced infrastructure and AI teams, rather than a formally announced commercial program.

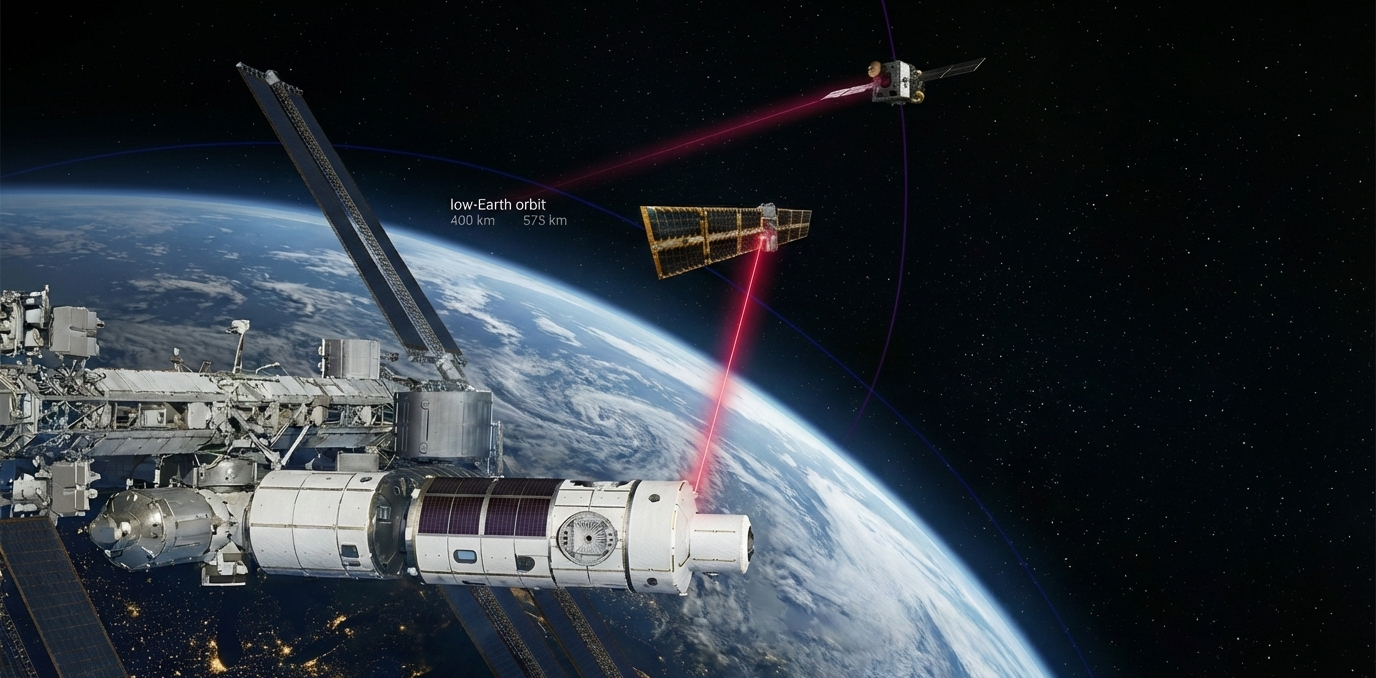

The concept envisions compact constellations of solar-powered satellites equipped with specialized AI accelerators, frequently referenced as TPU-class hardware, and interconnected through free-space optical (laser) communication links. The proposed architecture is designed to operate in Low Earth Orbit (LEO), a regime that offers lower launch costs and reduced latency compared to higher orbits, along with strong solar exposure in suitably chosen orbits.

By utilizing near-continuous sunlight and optical inter-satellite links, the concept aims to explore how distributed orbital compute could scale efficiently while minimizing reliance on terrestrial power grids, land, and water resources. Importantly, Project Suncatcher remains exploratory in nature.

Nonetheless, the idea is significant because it illustrates how hyperscalers are theoretically extending cloud and AI infrastructure paradigms into space, signaling long-term interest in orbital computing as a potential complement to Earth-based data centers rather than an immediate replacement.

No operational satellites or commercial deployment timelines have been publicly confirmed as of early 2026. Even as a concept, Project Suncatcher highlights how future data center innovation may be shaped by constraints on terrestrial resources, pushing large technology players to investigate radically new infrastructure models beyond Earth.

Starcloud

Starcloud is an early-stage space-based data center startup, and Starcloud-1 is its flagship project exploring space-based cloud computing as a complement to terrestrial data centers. The company raised approximately USD 10 million in seed funding and is part of the NVIDIA Inception program, positioning it within the broader AI and high-performance computing ecosystem.

Starcloud’s near-term focus is a satellite-based technology demonstration, aimed at validating in-orbit compute capabilities rather than deploying a full-scale data center. In November 2025, Starcloud launched its first satellite, Starcloud-1, which carries an NVIDIA H100 GPU, reportedly about 100 times more powerful than any GPU previously operated in space.

With this mission, Starcloud reports becoming the first entity to train an LLM in space and the first to run Google’s open Gemma model in orbit. The company also plans to run high-powered inference on Capella SAR data in orbit for the first time.

While commercial-scale deployment remains a long-term objective, Starcloud’s approach highlights the industry’s shift from conceptual discussions toward practical, incremental experimentation, making it a noteworthy example of how space-based data center concepts are beginning to move from theory to early validation.

Challenges

Technical Constraints

For data centers in space, cooling is a challenge because there is no atmosphere to support convective or evaporative cooling, leaving thermal radiation as the only practical mechanism for heat rejection.

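Radiated power follows the Stefan-Boltzmann law, so the required radiator area grows in direct proportion to the heat load at a fixed radiator temperature and rises steeply if the radiators must run cooler. As a result, a gigawatt-class orbital data center could require radiators on the order of square kilometers.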

Beyond sheer size, these radiators must be lightweight, micrometeoroid-resistant, and precisely oriented to avoid solar heating, while remaining structurally stable during orbital maneuvers.
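The radiator sizing argument above can be checked with a back-of-the-envelope calculation. The sketch below applies the Stefan-Boltzmann law to an idealized flat radiator, assuming an emissivity of 0.9, a uniform surface temperature, radiation from both faces, and no absorbed sunlight or Earth infrared. All of these are simplifying assumptions for illustration, not design values from any project.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(
    heat_w: float,
    temp_k: float,
    emissivity: float = 0.9,   # assumed surface emissivity
    sides: int = 2,            # assumed: both faces radiate to space
    sink_temp_k: float = 0.0,  # assumed: deep-space background neglected
) -> float:
    """Idealized radiator area needed to reject heat_w watts by radiation alone."""
    flux_per_side = emissivity * STEFAN_BOLTZMANN * (temp_k**4 - sink_temp_k**4)
    return heat_w / (flux_per_side * sides)


if __name__ == "__main__":
    for temp in (280, 300, 350):
        area_km2 = radiator_area_m2(1e9, temp) / 1e6
        print(f"1 GW at {temp} K: about {area_km2:.2f} km^2 of two-sided radiator")
```

Under these idealized assumptions, rejecting 1 GW at 300 K needs roughly 1.2 square kilometers of two-sided panel, or about twice that if only one face radiates. Real designs would need more, since absorbed sunlight, view-factor losses, and temperature gradients all reduce the net heat rejected.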

Orbital data centers operate in a radiation environment characterized by galactic cosmic rays, solar particle events, and trapped radiation belts, all of which can induce bit flips, logic errors, and cumulative semiconductor damage.

Unlike terrestrial facilities, where faulty hardware can be rapidly replaced, orbital platforms must tolerate prolonged exposure with minimal physical intervention.

While commercial accelerators such as Google’s Trillium TPUs have reportedly shown encouraging radiation tolerance in testing, and space-hardened processors improve resistance through redundancy and error-correction techniques, radiation hardening typically comes at the cost of performance, power efficiency, or design flexibility.

The long-term trade-off between computational efficiency and survivability remains a critical unresolved challenge.
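One common form of the redundancy mentioned above is triple modular redundancy (TMR): a value is stored or computed three times, and a bitwise majority vote masks a bit flip in any single copy. The sketch below is a minimal illustration of that voting step only. It is not a description of how any particular chip named in this article implements radiation tolerance, and the function name is hypothetical.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three copies: each output bit takes the value
    held by at least two of the three inputs."""
    return (a & b) | (a & c) | (b & c)


if __name__ == "__main__":
    original = 0b1011_0010
    # Simulate a single-event upset flipping bit 3 in one of the three copies.
    corrupted = original ^ (1 << 3)
    recovered = majority_vote(original, corrupted, original)
    print(f"original : {original:08b}")
    print(f"corrupted: {corrupted:08b}")
    print(f"recovered: {recovered:08b}")
```

The cost side of the trade-off is visible even here: the scheme triples storage or compute to tolerate one faulty copy, and it cannot correct the same bit flipping in two copies at once.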

Economic Reality

Although declining launch costs and reusable rockets are improving access to orbit, the economics of orbital data centers extend far beyond launch expenses alone.

Designing space-qualified hardware, incorporating radiation shielding, redundancy, and autonomous fault management systems, dramatically inflates upfront capital costs compared to terrestrial data centers.

Additionally, operational expenditures remain structurally higher due to limited repair options, reliance on robotic servicing, and the need for overengineering to ensure reliability.

Even under optimistic assumptions, the total cost of ownership for orbital compute infrastructure is likely to remain multiples higher than ground-based deployments, confining its viability to highly specialized, high-value use cases rather than mainstream cloud computing.

Recent Developments and Policy Signals

Recent institutional and experimental developments suggest growing long-term interest in off-Earth data infrastructure.

The European Union’s ASCEND feasibility study highlighted the potential role of orbital data centers in supporting 2050 carbon neutrality goals, framing them as a strategic research pathway rather than a near-term commercial solution.

Meanwhile, lunar data storage experiments, such as those conducted by Lonestar Data Holdings aboard Intuitive Machines landers, have demonstrated that basic off-Earth data storage is technically feasible, laying early groundwork for future lunar operations.

Conclusion

As AI workloads scale, Earth-based data centers face mounting pressure from grid capacity limits, water-intensive cooling requirements, land-use opposition, and carbon reduction mandates. These challenges are becoming particularly visible in mature and fast-growing data center markets such as the United States and India.

Space-based data centers are increasingly discussed as a long-term response to the escalating energy, environmental, and spatial constraints associated with terrestrial AI infrastructure. While orbital edge computing has already demonstrated practical value for satellite-native applications, extending this model to large-scale, cloud-style data centers introduces profound technical and economic challenges.

Rather than replacing Earth-based infrastructure, space-based data centers are more likely to evolve as a complementary layer supporting space-native workloads, mission-critical data storage, and future lunar and deep-space operations.

Over time, they could form part of the foundational digital infrastructure of an emerging off-Earth economy, enabling scientific research, autonomous systems, and sustained human activity beyond Earth.

Viewed through this lens, data centers in space are not a disruptive alternative to terrestrial computing, but a strategic enabler whose relevance could grow alongside humanity’s expanding presence in space.
